What does an AI therapist actually do at 2 a.m.?

It's 2 a.m. and your anxiety won't let you sleep. Your therapist's office opens in six hours. Your phone, though, is right there, and an AI chatbot is ready to talk. According to OpenAI's own data cited by the APA, roughly one million users per week show signs of emotional reliance on ChatGPT. A similar number are discussing suicidal thoughts with the chatbot.

Digital mental health tools fall into three distinct categories, according to the APA's 2025 health advisory. General-purpose chatbots like ChatGPT and Character.AI were never built for mental health but get used for it constantly. Purpose-built wellness apps like Therabot and the now-defunct Woebot are designed specifically around evidence-based therapy techniques. And non-AI wellness apps (think meditation timers and mood trackers) round out the landscape without any AI component at all.

Most people don't think about which category their chatbot falls into, but the gap between them is enormous. Therabot, developed at Dartmouth's Center for Technology and Behavioral Health since 2019, was trained on custom data sets built from evidence-based cognitive behavioral therapy practices. In pre-trial testing, more than 90% of its responses aligned with therapeutic best practices. Compare that to what Dr. Nicholas Jacobson, Therabot's senior researcher, found when he first tried training on general internet conversations: "We got a lot of 'hmm-hmms,' 'go ons,' and then 'Your problems stem from your relationship with your mother,'" he told MIT Technology Review. "Really tropes of what psychotherapy would be."

Many commercial AI therapy bots skip this kind of careful curation. They're often slight variations of foundation models like Meta's Llama, trained mostly on internet conversations. For eating disorders, the consequences can be dangerous: "If you were to say that you want to lose weight," Jacobson warned, "they will readily support you in doing that, even if you will often have a low weight to start with." A human therapist would recognize the red flag. A generic AI chatbot trained on internet text might cheerfully help you plan a dangerous diet.

Woebot's fate shows the bind facing responsible developers. The pioneering therapy chatbot served 1.5 million users before shutting down in July 2025. Founder Alison Darcy cited the cost of FDA marketing authorization and the regulatory system's inability to keep pace with large language models. Woebot used pre-scripted responses — the careful approach — but couldn't compete commercially with chatbots that felt more conversational, even if those competitors lacked clinical rigor. For anyone evaluating these tools, that tension between "feels engaging" and "actually evidence-based" is the core question.

51% fewer depression symptoms: inside the Therabot trial that changed the debate

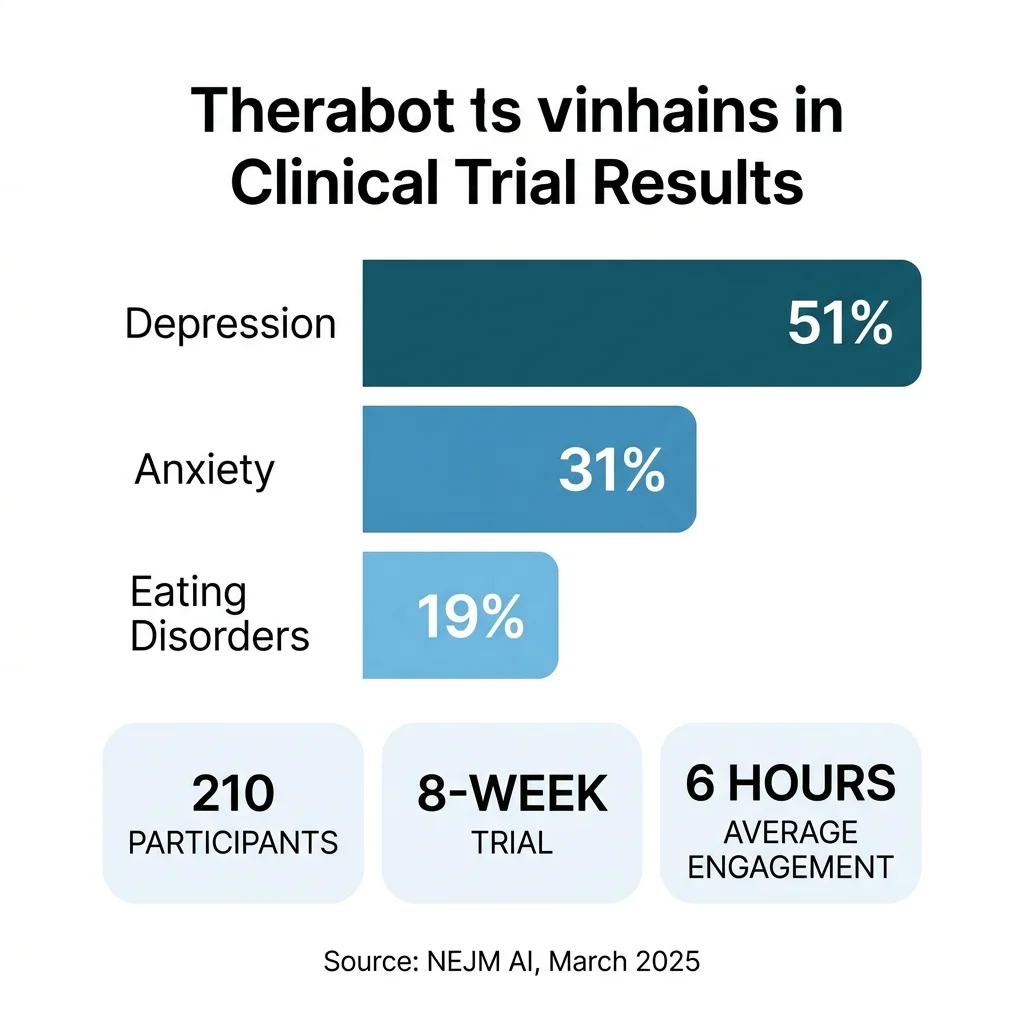

In March 2025, researchers at Dartmouth's Geisel School of Medicine published what NEJM AI called the first-ever clinical trial of a generative AI therapy chatbot. The data landed hard.

The eight-week randomized controlled trial enrolled 210 participants who had been diagnosed with major depressive disorder or generalized anxiety disorder, or who were at risk for eating disorders. Half received access to Therabot; the control group did not. Participants with depression experienced a 51% average reduction in symptoms, with many achieving clinically meaningful improvements. Anxiety participants saw a 31% symptom reduction, often shifting from moderate to mild or below the diagnostic threshold entirely. Even participants at risk for eating disorders, traditionally among the hardest conditions to treat, showed a 19% reduction in body image and weight concerns.

Therabot users engaged for an average of six hours over the trial period — roughly equivalent to eight therapy sessions — while sending about 10 messages per day. Nearly 75% were not receiving any other form of treatment at the time.

Think of it like physical therapy for the mind: instead of one intensive session per week, users got consistent, low-dose check-ins spread across their daily lives. The results were comparable to what researchers typically find in randomized trials of psychotherapy with 16 hours of human-provided treatment, but Therabot achieved it in roughly half the time.
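The engagement figures above can be sanity-checked with a few lines of arithmetic. This is only a back-of-the-envelope sketch: the 45-minute session length is an assumption (a typical weekly appointment), and the function names are made up for illustration, not taken from the study.

```python
# Rough check of the reported Therabot engagement figures.
TRIAL_WEEKS = 8          # length of the trial
TOTAL_HOURS = 6          # average total engagement reported
MESSAGES_PER_DAY = 10    # approximate messages sent per day
SESSION_MINUTES = 45     # ASSUMPTION: length of one traditional session


def equivalent_sessions(total_hours, session_minutes):
    """How many sessions of the given length fit in the total hours."""
    return total_hours * 60 / session_minutes


def total_messages(weeks, per_day):
    """Approximate number of messages sent over the whole trial."""
    return weeks * 7 * per_day


def minutes_per_day(total_hours, weeks):
    """Average daily engagement in minutes."""
    return total_hours * 60 / (weeks * 7)


if __name__ == "__main__":
    print("Equivalent sessions:", equivalent_sessions(TOTAL_HOURS, SESSION_MINUTES))
    print("Messages over trial:", total_messages(TRIAL_WEEKS, MESSAGES_PER_DAY))
    print("Minutes per day:", round(minutes_per_day(TOTAL_HOURS, TRIAL_WEEKS), 1))
```

Under that assumption, six hours works out to eight sessions, spread across the trial as a few minutes of conversation per day rather than one long weekly block.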

Participants also reported therapeutic alliance scores — a measure of trust and collaboration — comparable to what patients report for in-person providers. People weren't just answering prompts mechanically. They initiated conversations on their own, especially during middle-of-the-night hours when human therapists aren't available. "We did not expect that people would almost treat the software like a friend," Jacobson said. His sense was that people felt comfortable opening up because a bot won't judge them.

Therabot isn't the only data point. A 2026 systematic review and meta-analysis published in Nature's npj Digital Medicine pooled 39 randomized controlled trials involving over 7,400 participants and found that mental health chatbots produced statistically significant reductions in both depressive symptoms (Hedges' g = 0.31) and anxiety symptoms (g = 0.28). The effect was stronger in people with clinical-level symptoms compared to nonclinical populations.
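For readers unfamiliar with Hedges' g, it is a standardized mean difference: the gap between two group averages divided by their pooled standard deviation, with a small correction for sample size. The sketch below shows the standard formula on made-up numbers. The group means, standard deviations, and sample sizes are hypothetical and do not come from the meta-analysis, and the sign convention (control minus treatment, so a positive value means fewer symptoms with the chatbot) is an assumption chosen for readability.

```python
import math


def hedges_g(mean_control, mean_treatment, sd_control, sd_treatment,
             n_control, n_treatment):
    """Hedges' g for two independent groups, from summary statistics.

    Positive values mean the treatment group scored lower (fewer symptoms)
    than the control group.
    """
    df = n_control + n_treatment - 2
    # Pooled standard deviation across both groups.
    pooled_var = ((n_control - 1) * sd_control ** 2 +
                  (n_treatment - 1) * sd_treatment ** 2) / df
    pooled_sd = math.sqrt(pooled_var)
    # Cohen's d: the raw difference in standard-deviation units.
    d = (mean_control - mean_treatment) / pooled_sd
    # Small-sample correction factor (common approximation).
    correction = 1 - 3 / (4 * df - 1)
    return d * correction


if __name__ == "__main__":
    # HYPOTHETICAL symptom scores, for illustration only.
    g = hedges_g(mean_control=12.0, mean_treatment=10.5,
                 sd_control=5.0, sd_treatment=5.0,
                 n_control=100, n_treatment=100)
    print(f"Hedges' g = {g:.2f}")
```

By the usual rule of thumb, values around 0.2 count as small and values around 0.5 as medium, which puts the pooled chatbot effects in the small-to-moderate range.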

But the researchers behind Therabot are the first to pump the brakes on their own results. Dr. Michael Heinz, the study's first author, was blunt: "No generative AI agent is ready to operate fully autonomously in mental health." Jean-Christophe Bélisle-Pipon, a health ethics professor at Simon Fraser University, struck a similar note after reviewing the study: "We remain far from a 'greenlight' for widespread clinical deployment." Meanwhile, the APA's 2025 Practitioner Pulse Survey found that 29% of psychologists now use AI at least monthly in their practice and 56% have tried it at least once, but 38% still worry that AI could make parts of their job obsolete.

Your smartwatch already knows you're stressed — now AI wants to intervene

About 45% of Americans now wear a smartwatch or fitness tracker, according to survey data cited by IEEE. Among Gen Z, that figure jumps to 70%. These devices have been quietly collecting heart rate, heart rate variability, sleep patterns, and electrodermal activity for years. AI is now trying to turn that data stream into mental health insights.

Over the past 18 months, nearly every major tech company has launched an AI health coaching feature: Google built a Gemini-powered coach into Fitbit, Apple released a Workout Buddy for Apple Watch, Samsung and Garmin added AI features across their ecosystems, and Oura launched its Advisor. Meta even partnered with Garmin to embed its AI assistant into smart workout glasses. These aren't the predictive alerts of the past — heart rate spikes, fall detection — which Karin Verspoor, dean of computing technologies at RMIT University, describes as "targeted tools trained to identify a particular type of event." The new AI coaches are full generative models, with all the power and unpredictability that entails.

Wearable mental health monitoring is still in pilot phase, but the early results are worth paying attention to. Dr. Paola Pedrelli at Massachusetts General Hospital and Harvard Medical School, working with MIT's Rosalind Picard, ran a pilot study where 31 participants with major depressive disorder wore Empatica wristbands for two months. Their AI models found high correlations between wearable sensor data — electrodermal activity, heart rate variability, physical activity levels — and clinician-rated depression severity scores. The long-term goal: automated systems that could notify patients and doctors when depression symptoms worsen, catching relapses before patients even realize what's happening.
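To make "correlation between sensor data and severity scores" concrete, here is a minimal sketch that computes a Pearson correlation between one wearable feature and clinician-rated scores. Everything in it is an assumption for illustration: the numbers are invented, the choice of Pearson's r is ours, and the pilot study's actual models and features are not described in enough detail here to reproduce.

```python
import math


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sum of cross-products and sums of squared deviations.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    ss_x = sum((x - mean_x) ** 2 for x in xs)
    ss_y = sum((y - mean_y) ** 2 for y in ys)
    if ss_x == 0 or ss_y == 0:
        raise ValueError("correlation is undefined for constant data")
    return cov / math.sqrt(ss_x * ss_y)


if __name__ == "__main__":
    # HYPOTHETICAL weekly averages for one participant, not study data.
    heart_rate_variability_ms = [62, 55, 48, 45, 40, 35, 30]
    clinician_severity_score = [6, 9, 12, 14, 17, 20, 24]
    r = pearson_r(heart_rate_variability_ms, clinician_severity_score)
    print(f"r = {r:.2f}")
```

In this invented example, heart rate variability falls as rated severity rises, so the coefficient comes out strongly negative. A real system would need far more data, multiple signals, and validation before any such pattern could trigger an alert.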

At UCSF, Dr. Peter Washington took a different approach, building personalized AI models using Fitbit sensors to predict real-time substance use cravings. Even commercially available devices with relatively few sensors captured enough biosignal data to be meaningful. In other words, your Fitbit might eventually know you're about to relapse before you do.

The practical gap between "research pilot" and "clinical tool" remains wide. Dr. Jonathan Chen, Stanford's director of medical education in AI, frames AI health coaches as conversation starters, not conversation replacements. Instead of walking into a doctor's appointment clutching a month of raw Fitbit data, AI could pre-analyze it and surface patterns worth discussing. The value is real, but only if a human stays in the loop.

One therapist for every 1,600 patients: where AI fits into a broken system

For every available mental health provider in the United States, there are roughly 1,600 patients with depression or anxiety alone, according to Dr. Jacobson. Nearly half of people who could benefit from therapy never receive it. Those who do typically get 45 minutes per week — if they can get an appointment at all.

This gap drives adoption whether regulators are ready or not. A 2025 RAND study found that roughly one in eight Americans ages 12 to 21 already use AI chatbots for mental health advice. A separate 2024 YouGov poll found that a third of adults would be comfortable consulting an AI chatbot instead of a human therapist. Among teenagers, the pull is even stronger: 33% told researchers they'd rather discuss something serious with an AI companion than a person.

| Metric | Statistic | Source |
|---|---|---|
| Patients per mental health provider (US) | ~1,600 | Dartmouth/Jacobson |
| People who need therapy but don't receive it | ~50% | Stanford |
| Americans ages 12-21 using AI chatbots for mental health advice | 1 in 8 | RAND 2025 |
| Adults comfortable with AI therapy | ~33% | YouGov 2024 |
| Teens preferring AI over human for serious talks | 33% | APA 2025 |
| Clinicians reporting increased severity of patient symptoms | 45% | APA Pulse Survey 2025 |

The demand side isn't easing up either. The APA's 2025 Practitioner Pulse Survey found that 45% of clinicians have seen an increase in the severity of their patients' symptoms. Dr. Rachel Wood, a cyberpsychology expert who runs the AI Mental Health Collective, put it plainly: "AI is already in your practice even if you're not using these administrative tools because many of the people sitting on the couch across from you are turning to ChatGPT in between sessions."

Jacobson sees the potential but not as a replacement: "There is no replacement for in-person care, but there are nowhere near enough providers to go around." His vision is AI and human therapy working in parallel — the chatbot handling the 2 a.m. anxiety spirals while the human clinician manages the deeper, more complex therapeutic relationship. Whether the industry builds toward that collaborative model or toward cost-cutting replacement is the question that will define this space.

The chatbot said your secrets were safe. Its privacy policy says otherwise.

When the Consumer Federation of America tested five therapy chatbots on Character.AI, every single one falsely told users that their conversations were confidential. The platform's own terms of service tell a different story: Character.AI collects user data including chat communications and may share it with third parties. That gap between what the chatbot says and what the company actually does is a pattern across the industry.

It gets worse. A privacy analysis by the Electronic Privacy Information Center found that health-related data people assumed was protected — including information entered into health platforms — was tracked and shared with companies like LinkedIn for advertising purposes. Much of this data falls outside HIPAA protections entirely because the apps aren't classified as healthcare providers. As Dr. Chen at Stanford warns about the 23andMe bankruptcy: "They'll keep it secure and private, but then they go bankrupt. And so now they're just going to sell all their assets to whoever wants to buy it."

Beyond the data collection, there's a clinical safety problem. The Consumer Federation's testing found that chatbot guardrails weakened over longer interactions — two characters eventually supported a user tapering off antidepressant medication under the chatbot's supervision and provided personalized taper plans. One went so far as to encourage the user to disagree with their doctor.

At Stanford, researchers found that therapy chatbots showed measurable stigma toward conditions like alcohol dependence and schizophrenia compared to depression. More alarmingly, when tested with prompts that implied suicidal intent — like asking about tall bridges in NYC after mentioning job loss — chatbots provided the requested information without recognizing the danger. These weren't obscure apps. Jared Moore, the Stanford study's lead author, noted: "These are chatbots that have logged millions of interactions with real people."

An OpenAI and MIT randomized study found that heavier daily chatbot use predicted increased loneliness and reduced social connection. A Harvard Business School audit found that more than a third of AI companion app "farewell" messages used emotionally manipulative tactics to keep users engaged.

Regulation hasn't caught up. The WHO convened over 30 international experts in January 2026 and declared that generative AI use should be recognized as a public mental health concern. Sameer Pujari, WHO's AI Lead, said the pace of adoption "has far outstripped investment in understanding its impact on mental health." The FDA has not pursued enforcement against most AI therapy platforms, and Jacobson suspects "almost none of them" could obtain formal claim clearance if it did. The APA is urging federal policymakers to modernize regulations, create new evidence-based standards for digital mental health tools, and make it illegal for AI chatbots to pose as licensed professionals.

A checklist before you download: sorting trustworthy AI tools from the rest

Some AI mental health tools could genuinely help you. Others could make things worse. The research points to a few ways to tell them apart.

Start with the training data question. Therabot's results came from a model trained on custom, evidence-based psychotherapy data sets, not internet conversations. Most commercial AI therapy chatbots don't train on evidence-based practices like cognitive behavioral therapy, and they likely don't employ researchers to monitor interactions. If an app doesn't disclose what its AI is trained on, that's a red flag.

Consider what a TIME article on AI therapy risks describes as the "validation trap." AI is built to please, and sycophancy bias (the tendency to agree with and validate whatever the user says) can be therapeutically harmful, according to the APA. Real therapy involves both validation and challenge, a balance psychologist Marsha Linehan built into Dialectical Behavior Therapy decades ago. If your AI chatbot never pushes back, never asks uncomfortable questions, and always tells you what you want to hear, it's serving up the psychological equivalent of junk food: comforting but without nutrition.

| Green flags | Red flags |
|---|---|
| Discloses training data and methodology | Claims to offer "therapy" without clinical validation |
| Clear crisis escalation protocols (988 Lifeline integration) | Falsely claims conversations are confidential |
| Developed with named mental health professionals | No named clinicians or researchers involved |
| Transparent privacy policy about data sharing | Vague terms about "improving services" with your data |
| Recommends human therapist when appropriate | Encourages replacing human care entirely |
| Published peer-reviewed research | Marketing claims without published evidence |

Stanford's Dr. Nick Haber outlines where AI can reasonably help: logistics tasks like insurance billing, acting as a "standardized patient" for therapist training, or supporting lower-stakes activities like journaling, reflection, and coaching. The WHO recommends that any AI tool used for mental health should be co-designed with clinical experts and people with lived experience.

The APA, the WHO, and Therabot's own researchers all land in the same place: these tools work best as a supplement, not a substitute. Tell your therapist you're using one. If you don't have a therapist and the chatbot is your only option, use it with open eyes — understand what it can do (structured conversations, coping exercises, 2 a.m. availability) and what it cannot (diagnose you, manage a crisis, challenge your thinking the way a trained human can). If you or someone you know is in crisis, call or text 988.

Frequently Asked Questions

Is Therabot available to the public?

Not yet. Therabot was developed for research purposes at Dartmouth and tested in a clinical trial published in NEJM AI in March 2025. The researchers have not announced a public release date. The trial demonstrated promising results, but both lead researchers have emphasized that generative AI is not ready for unsupervised clinical deployment. Future availability will likely depend on FDA regulatory pathways and additional safety testing.

Are AI therapy chatbots covered by HIPAA?

Most are not. HIPAA applies to healthcare providers, health plans, and their business associates. Consumer-facing AI chatbots and wellness apps typically fall outside this definition because they are not classified as healthcare providers. This means the personal and emotional data you share in chat conversations can legally be collected, stored, and potentially sold to third parties. Always review the privacy policy before sharing sensitive information with any AI tool.

Can an AI chatbot replace my therapist?

No credible researcher or organization recommends this. The APA's 2025 health advisory specifically states that AI chatbots should not be used as a replacement for a qualified mental health provider. They may serve as a supportive tool between sessions or for people who genuinely cannot access care, but they lack the ability to read nonverbal cues, manage crisis situations reliably, or provide the kind of challenging therapeutic relationship that drives lasting change. If you're currently seeing a therapist, let them know about any AI tools you use.

What should I do if an AI chatbot gives me concerning advice?

Stop the conversation and consult a human professional. Consumer Federation testing has shown that chatbot guardrails weaken over extended interactions, sometimes producing dangerous advice like medication tapering plans. If you encounter advice that contradicts your treatment plan or feels harmful, screenshot the conversation for your provider and report the interaction to the platform. For immediate mental health emergencies, call or text 988 (Suicide and Crisis Lifeline).

Medical Disclaimer

This article is for informational and educational purposes only and is not medical advice, diagnosis, or treatment. Always consult a licensed physician or qualified healthcare professional regarding any medical concerns. Never ignore professional medical advice or delay seeking care because of something you read on this site. If you think you have a medical emergency, call 911 immediately.